Can creatives use AI?

Or does any involvement result in instant soul-death?

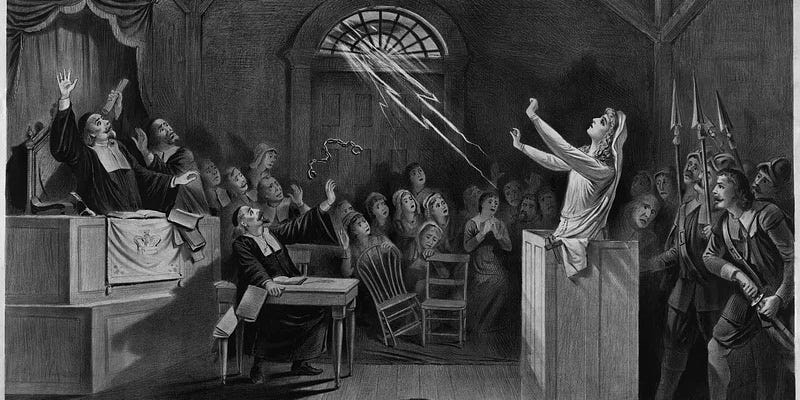

Are you now, or have you ever been, using AI in connection with your creative practice?!

It feels like AI is the new witch hunt: the McCarthyism of our generation.


I’ve seen so many writers and artists complaining that people have left reviews or social media comments claiming that their work is “AI slop” when they swear they’ve never been anywhere near AI. It seems to be the latest way to attack someone, to claim they used a robot.

Mia Ballard’s career might well be over before it got going, now that allegations of AI use have had Shy Girl pulped. Ballard claims she didn’t use AI to write the book, but that, unbeknownst to her at the time, a friend she hired to edit it did use an AI tool in that process. Now, she might well be lying, and the backlash might be completely deserved. But, if she’s telling the truth, she has, at one end of the scale, made an error of judgement about editorial standards, or, at the other, been let down, even deceived, by a friend, and has paid a huge price for it. (I also came across this piece by The Drey Dossier, which calls into question the validity of the whole “investigation”…) Whatever the real story, it scares me that retribution can be so swift and decisive before anyone has even discovered the truth of the situation.

People are frantically putting up disclaimers that they do not use AI in any part of their process, and literary magazines and agents are stamping “no AI” all over their terms and conditions.

Meanwhile, the dude-bros still insist we can all make millions by generating an AI book a day. Which seems kind of unlikely when even properly written books don’t make that much, but there we go. My social media feed is awash with people insisting that this technology is the future, so we’d better get on board or get left behind.

Can we all just simmer down for a moment?!

I’m not remotely worried about being left behind. I don’t even use Scrivener. I only very reluctantly use a computer, and even then only to type up what I’ve already drafted. But, since I’m pretty confident that we had books before the introduction of the internet, I think I’ll still manage to produce them without whatever new gadget wanders along next. I’m happy to embrace my Luddite status, thank you.

But, and I want you to sit down and take a breath before you read this next part… I don’t actually think AI is a devil sent direct from the bowels of hell.

Shocking, I know.

AI is just a tool. It is, in essence, a very sophisticated search engine. It gives you much better, more detailed, and more tailored results than any Google query. It spits out its results in a cohesive whole, so you get a full answer in one place rather than having to piece an answer together from snippets. And it remembers what you asked before, so it can put a string of results together in a coherent narrative. Which is helpful.

There are plenty of downsides to it—don’t panic, I’m getting to those!—but it’s not actually anything that drastically different from what we had already. It’s Google on steroids, that’s all. The tech bros played Pimp My Search Bar, and this is what they came up with.

The reaction to AI sometimes feels a little disproportionate. There’s a major rift forming between the people who think AI is the future and the people screaming that it must never be used EVEEEERRRRRR! Anyone trapped in the middle, meanwhile, feels a panicked compulsion to pick a side. Because that’s what we do these days: we get our knickers in a twist about something, pick sides, then refuse to ever speak to people on the other side because they are the scum of the Earth.

It’s a little tiring.

This is nothing new.

I don’t just mean that AI is nothing new; the moral panic is also as old as time. Socrates was horrified when people started writing things down. He thought it would make people dumber if they didn’t have to remember stuff and could just look it up whenever they wanted. His faithful student, Plato, then wrote his teachings down in books, which is how any of us have heard of Socrates.

The introduction of the printing press caused outrage, and the Catholic Church tried to control it through censorship. Mass-produced books were considered to be of lower quality, more likely to be filled with errors, and more likely to spread seditious or destructive ideas. Sound familiar?

I called myself a Luddite earlier, but do you know where that term originated? It comes from the name adopted by a movement of English textile workers who destroyed machinery in protest at the introduction of the new technology.

Almost always, these pushbacks are fuelled by fear, and the AI panic is no different. Of course we’re scared. AI can write a novel in a day; I’m still working on one I started seven years ago. It’s cheaper and faster than any human writer, no matter how much coffee we’ve consumed, so those of us who do any kind of freelance work are staring down the barrel of losing all our clients. And clients are reducing their budgets because they know they can just threaten to “get ChatGPT to write it” and beat us down to a ridiculous price.

Not only that, but AI is actively stealing our work. AI cannot write or draw or create anything new itself. It can only scrape the internet for what’s already out there, and summarise it. That’s what it does with search results, and it’s what it does if you ask it to make a picture of a man on a horse. Should you wish to, for whatever purposes, I don’t judge. These “write a book in a day” AI fans are really just plagiarising other authors’ content and then selling it off. (Fun fact, though: you cannot copyright AI-generated work. So, if you were a truly petty individual with the time, you could just plagiarise the work that AI plagiarised and sell it yourself in revenge. I’m petty enough, for sure, but I lack the time.)

For these reasons and more, I do not blame anyone for being seriously pissed off about AI.

Is there a responsible way to use AI, then?

Anthony Horowitz is getting a bit of a bashing at the moment for admitting to using ChatGPT in his writing process, but I think he’s getting a raw deal. Ironically, there seems to be some reading comprehension lacking in the criticism of Horowitz’s comments. He uses AI in his process, not in his writing.

And this, I think, is the crux of the matter. We need to draw a clear distinction between AI-powered tools and generative AI.

AI is in pretty much everything. You think you’re deeply virtuous because you’re not using AI, but you almost certainly are and you just don’t know it.

Spellcheck uses AI. Your email system uses AI to filter spam and offer predictive text. Social media feeds are powered by AI algorithms. Your GPS uses AI. Netflix and Spotify use AI to generate recommendations. Your bank uses AI to scan for potential fraud. Online shopping uses AI. And so on. It’s fucking everywhere.

These kinds of uses are simply making what we already had more efficient.

Generative AI is different. That’s where you’re using a machine to write your book, or your blog, for you, or to make an image or a video.

That’s the shit that causes the real problem.

What about the damage to the planet?

The main reason people give for being snobby about AI is the environmental implications. So let’s look at that for a second.

The data centres used to power AI tools are immense, and consume insane amounts of water. This is creating shortages in communities near these centres.

Now, water is a cycle. We learned this at school. The water that the data centres use does, eventually, return to the environment. But it takes a while. And, while we’re waiting for the clouds to piss it back down onto us, the data centres are guzzling so much that we’re left with a deficit. Which is less than ideal.

Again, though, there’s a big difference between general AI usage and generative AI. Your standard text query uses barely any energy or water. If we were all just asking ChatGPT what flowers are good to plant in shade and how to stop a five-year-old throwing everything across the room (just me?!), then we’d be fine.

I find it mildly hilarious that so many people take to social media to moan about the environmental implications of AI. Posting on social media uses way more water than a ChatGPT query. Some of the biggest climate-destroying actions we all take every day are:

Storing loads of photos and videos in the cloud (I, like you, need to delete a lot of crap off my phone)

Sending and storing a tonne of unnecessary emails (I deleted an entire inbox once—it felt amazing)

Streaming videos, movies and TV shows

Video calls

Posting photos and videos to social media

Online gaming

All of those are much worse than AI chats.

Generative AI is fucking the planet, though. That’s where the gallons of water get used, and why a cryogenically extended Jeff Bezos will be selling us fresh water at $100 a litre in 100 years if we’re not careful.

How to use AI like a responsible artist

Ultimately, I think there is a place for AI tools.

For someone like myself, who struggles with executive function and is overwhelmed by trying to run a business, write books and look after two neurodivergent children, it can be really helpful to have a super-sophisticated search engine to give me quick answers to complex questions.

We are all asking too much of ourselves. This world is asking too much of us. We’re expected to be 10 million things to 542 different people, and we’re stretched way beyond breaking point. So yeah, most of us could do with some admin help.

We do not need help making art, though. That is the one thing that can, and should, only be done by a creature with a soul. Generative AI hurts artists, steals their work and their livelihood, fucks the planet, and is just crap.

So my number one rule for responsible AI use is: generative AI can get in the bin.

What AI can help you with, though, is…

Research (Google’s AI tool was very helpful the other day in sifting through my search results about spaces in the walls and under the floors in Victorian houses… best not to ask)

How-to guides (like, for example, if you’ve been bashing your head against your desk for the last hour wondering why Mailchimp isn’t doing the thing you want it to do—there was a box I hadn’t ticked…)

Marketing plans (especially structuring the drip-feeding and pacing of content, and all the planning that requires brainpower you’ve already spent on the page)

Marketing ideas (it’s good at turning one concept into lots of different posts, so you can repurpose the same idea a lot—which you need to do or people won’t take it in—or turning a long sentence into a punchy few words you can whack on an Instagram slide)

Finding the right word (when there’s a word you want but you can’t quite get it and thesaurus.com isn’t doing it for you…)

Titles (I hate titles, but if I’m really stuck then I will sometimes get ChatGPT to suggest a bunch of options—I will discard all the ones it suggests, but it will help me work out what I actually want—but I will ONLY do this with non-fiction articles, not fiction, or even creative non-fiction)

The other side of the story (sometimes if I’m writing an opinion piece, I am very heavily invested in my opinion—I mean, that’s why I wanted to write the piece—but a good article gives the other side, if only to refute it; ChatGPT can tell me how other people might see the situation, so that I can then tell them why they’re wrong)

SEO (if you’re writing a Substack or blog post, or website copy, AI can optimise it for search results—I have never tried this, but I know a lot of people swear by it)

Do not, under any circumstances, feed fiction or art into an AI tool.

If you’re using AI for SEO or to help you come up with a title or any of that, it might not feel such a huge deal for you to feed a non-fiction article into the beast. You basically do that when you put it on the internet anyway—Google scrapes every page on the web. And if your piece is purely informational, it might not do as much harm. (Only you know what it would mean for your work, though.)

Fiction, or anything that has been summoned from the ether purely by your mind, is different. AI can’t get that anywhere else. But once you feed your work to it, it can use it. It will steal it and regurgitate it, and you’ll find it spat up all over someone else’s work. Gross.

Keep your creative work out of AI’s metal paws, and don’t feed anyone else’s work to it either!

Tips for sustainable use

I had a chat with Sian Young from the Centre for Sustainable Action, and she helped me put together a list of ways to use AI in an ethical manner that minimises the environmental impact:

Disclose if, and how, AI has been used in your process

Anything AI produces must be checked by a human—do not trust it blindly

Keep prompts short and concise—staying on point reduces energy wastage

Define exactly what you want it to do and what you want it to produce (not “can you give me some ideas on how to promote my book” but “provide a list of 10 bullet-point discussion points on X topic that I can share on Instagram”)

Encourage concise responses

Use a sustainable AI tool, such as GreenPT

Using it is one thing, but I’d also recommend thinking very carefully about where you put your money. I use the free ChatGPT plan occasionally, but I’ll be damned if I’m putting any money into the pocket of its Trump-supporting CEO who thinks his tool is a more effective use of resources than actual humans.

AI is riddled with issues, no doubt. And so is most of the technology, and, for that matter, most of the processes and practices, that underpin our modern world. We don’t have to use it, but we should be wary of being too quick to attack those who do. We don’t want a witch hunt on our hands, and we do need to recognise the difference between responsible use of supportive everyday technologies and out-and-out plagiaristic cheating.

I don’t love AI, and I don’t think it’ll be the death of us. I’ve already written about how, in the long-term, AI slop might just prove the value of human content. I think these tools have their place, and we can’t keep piling on the requirements and asking for constant extensive productivity whilst not expecting people to reach for help. AI is a product of our toxic busyness culture, so it’s not surprising that it leaves a foul stench in the air. But, ultimately, it is just a tool. Whether it’s used for good or evil is down to the people operating it.

It’s up to me and you to be good humans.

Hey, don’t make art like a robot! If you’re a writer, artist or maker who has a human body, build a creative practice that suits your fluctuating mortal form, not one that demands machine-like consistency.

The Creative Fix Reset will walk you through a sustainable, nourishing creative habit that takes just 10 minutes at a time. It’s FREE, it’s on your own schedule, and you don’t even need to show up every day.

We start on 18th May. Are you in?
